This is going to be a long post about all things shader. I have been developing two main shaders for this project.

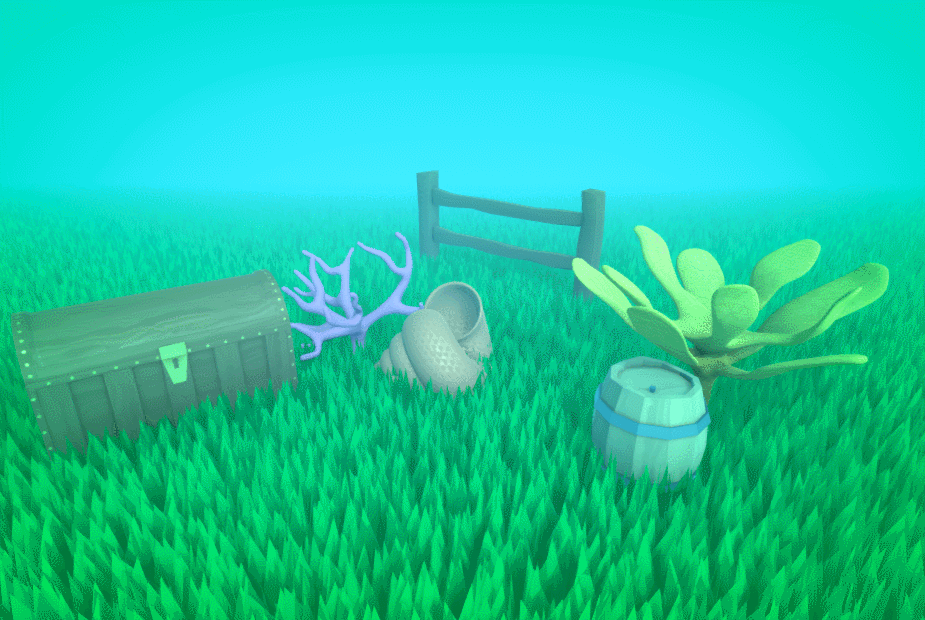

The first is the grass shader, to which I have just added fog functionality (the rest is just Harry’s grass shader; source: https://halisavakis.com/my-take-on-shaders-grass-shader-part-ii/).
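As a rough idea of what adding fog involves, a minimal sketch using Unity's built-in fog macros from UnityCG.cginc might look like this. It is a plain vertex/fragment shader rather than the grass shader itself, and the shader name, the _Color property and the v2f struct are placeholders made up for illustration.

```hlsl
Shader "Examples/FogSketch" // hypothetical name
{
    Properties
    {
        _Color ("Colour", Color) = (0.3, 0.8, 0.3, 1)
    }
    SubShader
    {
        Pass
        {
            CGPROGRAM
            #pragma vertex vert
            #pragma fragment frag
            #pragma multi_compile_fog // compile a variant for each fog mode

            #include "UnityCG.cginc"

            struct v2f
            {
                float4 pos : SV_POSITION;
                UNITY_FOG_COORDS(0) // declares fogCoord in TEXCOORD0 when fog is on
            };

            fixed4 _Color;

            v2f vert (appdata_base v)
            {
                v2f o;
                o.pos = UnityObjectToClipPos(v.vertex);
                UNITY_TRANSFER_FOG(o, o.pos); // hand fog data to the fragment stage
                return o;
            }

            fixed4 frag (v2f i) : SV_Target
            {
                fixed4 col = _Color;
                UNITY_APPLY_FOG(i.fogCoord, col); // blend towards the scene's fog colour
                return col;
            }
            ENDCG
        }
    }
}
```

The fog colour and density themselves come from the scene's lighting settings, so the shader only has to carry the fog coordinate through and apply it at the end.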

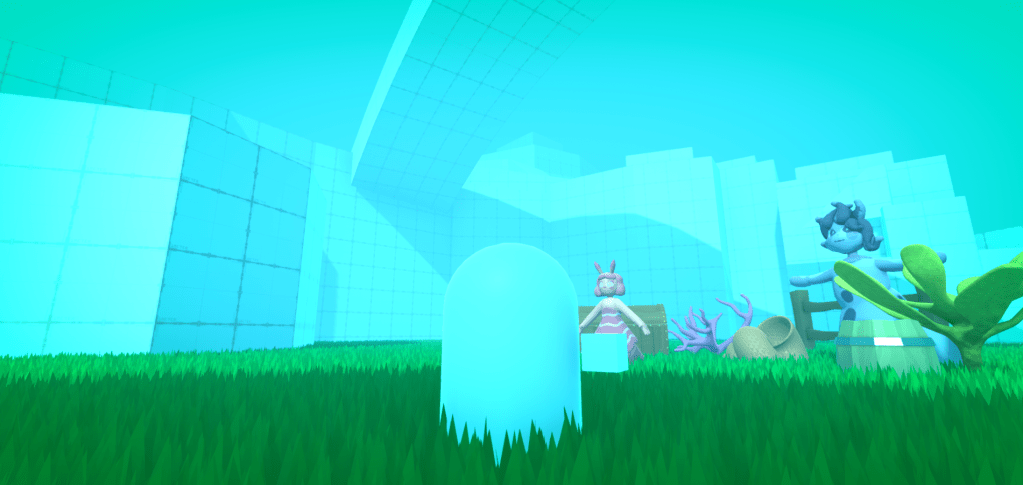

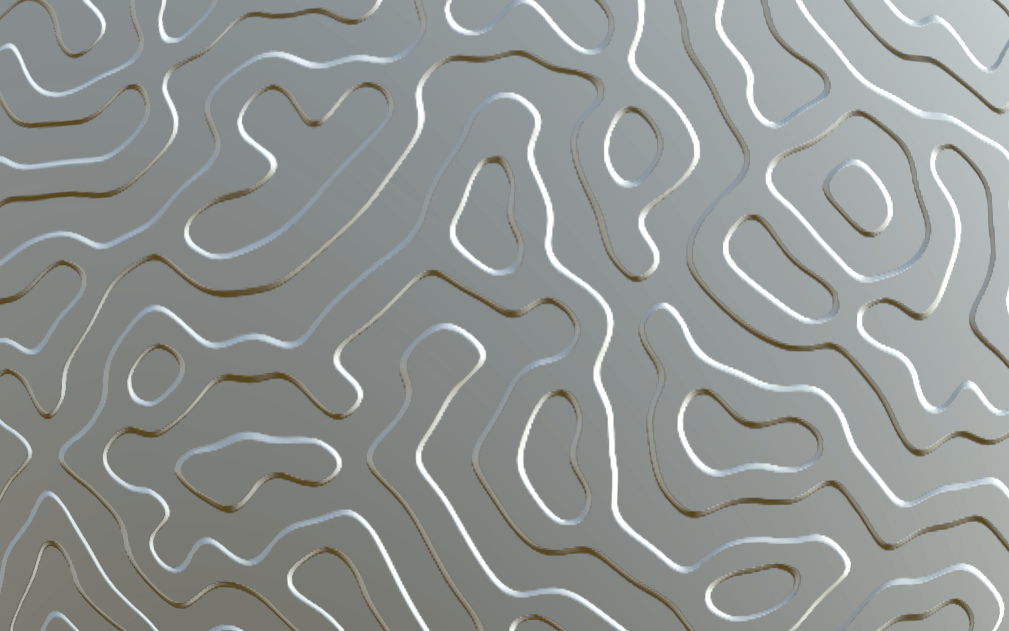

The second shader, while inspired by Harry’s, was actually built by yours truly, so I will take credit for its creation, as well as for all the problems it has.

The idea behind my version of the grass shader was simply that I could create a shader that rendered leaves instead of grass blades. This would then allow me to add some leaves that interact with wind (or water currents in our case). It would also be a lot more efficient than actually importing leaves as geometry into the scene and then just using shaders for animation (which is what most games do).

I am going to try to go over everything I know about shaders here, as my understanding is much better than it was in my last post. I didn’t think that I would finish this shader in time for it to be included in the project, which is why there hasn’t been more documentation up until this point.

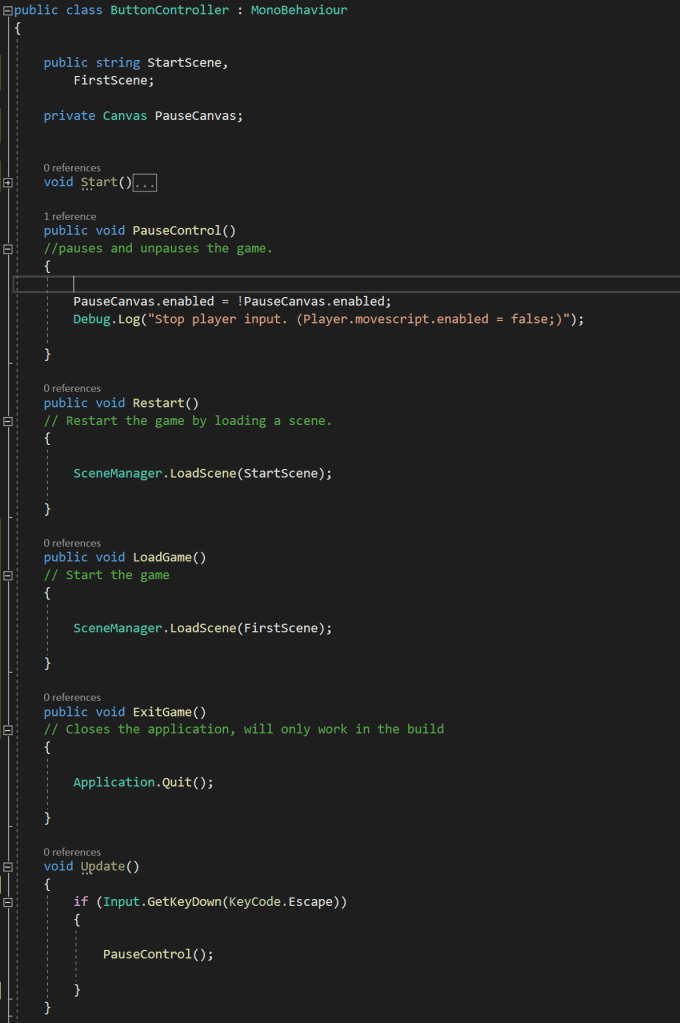

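Most standard shaders rely on two main programs (shader stages, which I will loosely call sub-shaders in this post) to do most of the work and achieve the effect they want. These are not the same thing as ShaderLab’s SubShader block.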

- The vertex shader, which receives the vertices and can edit their locations.

- And the fragment shader, which works on pixels and handles actually displaying our geometry on the screen. This is where colour effects are handled.
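To make the split concrete, here is a minimal sketch of the two sub-shaders in a single Unity shader. The vertex function nudges each vertex sideways with a sine wave and the fragment function outputs a flat colour. The shader name, the _Color and _SwayAmount properties and the sway formula are all made up for illustration and are not taken from either of the project's shaders.

```hlsl
Shader "Examples/VertexFragmentSketch" // hypothetical name
{
    Properties
    {
        _Color ("Colour", Color) = (0.2, 0.7, 0.3, 1)
        _SwayAmount ("Sway Amount", Float) = 0.1
    }
    SubShader
    {
        Pass
        {
            CGPROGRAM
            #pragma vertex vert
            #pragma fragment frag
            #include "UnityCG.cginc"

            fixed4 _Color;
            float _SwayAmount;

            // Vertex shader: runs once per vertex and may move it.
            float4 vert (float4 vertex : POSITION) : SV_POSITION
            {
                // Offset x in object space; vertices further from y = 0 sway more.
                vertex.x += sin(_Time.y + vertex.y) * _SwayAmount * vertex.y;
                // Convert the object-space position to clip space for the rasteriser.
                return UnityObjectToClipPos(vertex);
            }

            // Fragment shader: runs once per covered pixel and returns its colour.
            fixed4 frag () : SV_Target
            {
                return _Color;
            }
            ENDCG
        }
    }
}
```

Anything that changes the shape or position of the mesh belongs in vert, while anything that changes how it is coloured belongs in frag.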

The majority of the shader is written in CG/HLSL, most of the documentation for which can be found

here: http://developer.download.nvidia.com/cg/index_stdlib.html

and here: https://docs.microsoft.com/en-us/windows/win32/direct3dhlsl/dx-graphics-hlsl

Anything that isn’t CG is written in a syntax that the Unity documentation calls ShaderLab: https://docs.unity3d.com/Manual/SL-Shader.html

The structure of most shader code is as follows.

Properties

{

Values defined for each material using the shader, which allow the shader to be customised.

}

SubShader

{

CGINCLUDE (Specifies the beginning of the CG/HLSL code)

#include files. These are libraries for shaders; they usually contain Unity-specific functions to use. You can write your own as well, which allows multiple shaders to share any helpful functions that you make.

Structures. These are used to pass data between sub-shaders. They use HLSL semantics to identify what data you intend to pass to each parameter. Documentation for semantics: https://docs.microsoft.com/en-us/windows/win32/direct3dhlsl/dx-graphics-hlsl-semantics?redirectedfrom=MSDN

Property redeclaration. As we are now working inside CG, we have to redeclare all our properties, using the same names, with their matching CG variable types.

Shader-specific functions. We can write functions in much the same way that we do in C#, since CG is a C-like language. You can read more about that in the official CG documentation

here: http://developer.download.nvidia.com/cg/Cg_language.html

Sub-shaders. This is where we put our sub-shaders and where all the work gets done. Sub-shaders defined in CGINCLUDE can often be reused across passes, in which case you may find a pass that is missing certain sub-shaders because they are defined in CGINCLUDE instead.

ENDCG (end of our CG/HLSL code)

Pass (shaders in Unity use passes; the fewer passes, the more efficient the shader)

{

Tags{‘tag’} Tags in ShaderLab are used to communicate the intent of the pass to Unity’s rendering engine. We can also give other non-tag commands here, such as ‘Cull Off’, which tells Unity to render back faces as well.

CGPROGRAM

#pragma. ‘#pragma’ directives such as ‘#pragma vertex’ and ‘#pragma fragment’ define which sub-shader to use in this pass. We simply give the name of the corresponding function, which can come from CGINCLUDE, from any other library or from the code in this pass.

We can include any pass-specific sub-shaders or functions here if we want.

ENDCG

}

More passes. We can define more passes in the same way as the pass above.

}

Fallback. At the end of the shader, after the closing brace of the SubShader, we can specify a fallback shader for Unity to default to if a user’s hardware does not support our shader. You will often see the standard Unity Diffuse shader being used as a fallback.
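Put together, the outline above might look something like the following skeleton. It is a sketch rather than the project's shader: the shader name, the _TintColor property, the ApplyTint helper and the struct names are placeholders. Note that in a real file everything is wrapped in a Shader block, the Pass blocks sit inside SubShader, and the Fallback comes after it. Here the CGINCLUDE block is placed directly inside the Shader block, ahead of SubShader, which is a common arrangement.

```hlsl
Shader "Examples/StructureSketch" // hypothetical name
{
    Properties
    {
        // Exposed per material in the inspector.
        _TintColor ("Tint Colour", Color) = (1, 1, 1, 1)
    }

    CGINCLUDE
    // Shared CG/HLSL code, available to every pass below.
    #include "UnityCG.cginc"

    // Structures with semantics describe the data passed between stages.
    struct appdata
    {
        float4 vertex : POSITION;
    };

    struct v2f
    {
        float4 pos : SV_POSITION;
    };

    // Property redeclared as a CG variable (same name as in Properties).
    fixed4 _TintColor;

    // A shader-specific helper function.
    fixed4 ApplyTint (fixed4 col)
    {
        return col * _TintColor;
    }

    // Sub-shaders defined once here so that any pass can reuse them.
    v2f vert (appdata v)
    {
        v2f o;
        o.pos = UnityObjectToClipPos(v.vertex);
        return o;
    }

    fixed4 frag (v2f i) : SV_Target
    {
        return ApplyTint(fixed4(1, 1, 1, 1));
    }
    ENDCG

    SubShader
    {
        Pass
        {
            Tags { "LightMode" = "ForwardBase" }
            Cull Off // render back faces as well

            CGPROGRAM
            // Pick which functions act as this pass's sub-shaders.
            #pragma vertex vert
            #pragma fragment frag
            ENDCG
        }
    }

    // Used if the user's hardware cannot run the SubShader above.
    Fallback "Diffuse"
}
```

Because vert and frag live in CGINCLUDE, the pass itself only needs the two #pragma lines, which is why some passes in real shaders look almost empty.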

Now that you understand the basics of shaders in general, I can explain the ins and outs of the grass shader I developed in the next post.